Human-Centered AI: Why Context Beats Code in Business Adoption

Direct Answer:

Human-Centered AI (HCAI) is a design philosophy that prioritizes human needs, agency, and trust over pure automation. Instead of asking "What can this technology do?", HCAI asks "How does this tool help people do their work better?" Organizations that treat AI as a cultural change—not just a technical upgrade—see higher adoption, trust, and ROI.

TL;DR

Human-Centered AI puts people first: workers remain decision-makers while AI augments (not replaces) their judgment

Efficiency alone is a red flag—success requires balancing algorithmic power with user trust and explainability

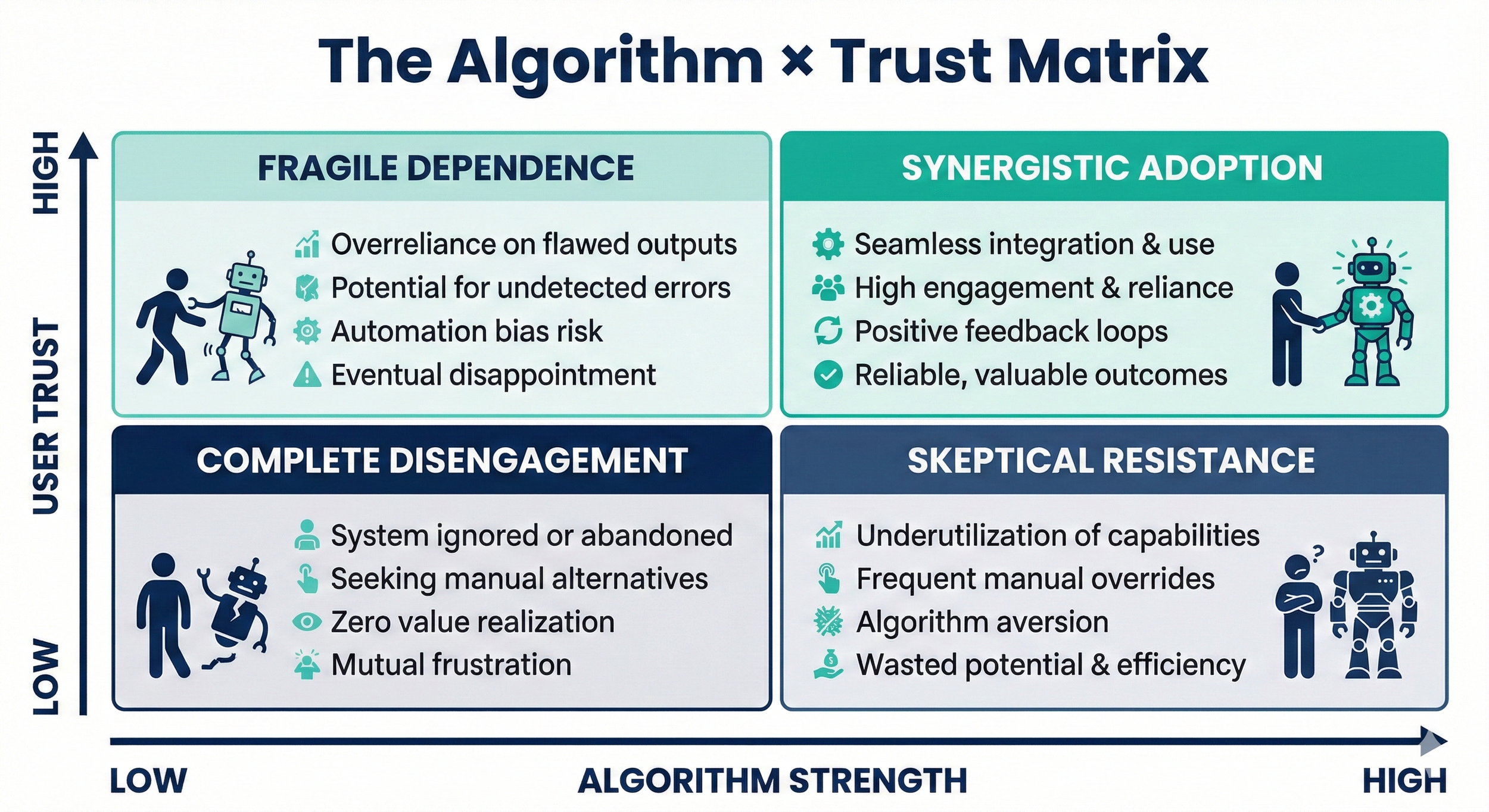

The matrix: Algorithm × Trust—high performance without trust leads to failed rollouts; trust without capability yields low-value tools

Three pillars: Explainability (users understand AI reasoning), Agency (humans retain final say), and Workflow Integration (redesign processes around AI, don't bolt it on)

What Is Human-Centered AI?

Human-Centered AI is a framework that embeds artificial intelligence into workflows while preserving human oversight, comprehension, and control. The National Institute of Standards and Technology (NIST) defines HCAI as systems designed to "enhance human capabilities and decision-making" rather than operate autonomously without accountability.

The philosophy flips the traditional question. Instead of "What can this machine do?", organizations ask: "How does this machine help us do what we're already doing—but better?"

This distinction matters because it reframes AI as a sociotechnical system, not just a software deployment. As Stanford HAI researchers note, "Technology is not neutral; its impact depends on how it's integrated into human processes."

Why "Efficiency" Is a Red Flag

Many leaders measure AI success by time saved or headcount reduced. But focusing solely on efficiency creates what we call the Executive-Worker Gap:

Executives see AI as a synthesis engine that generates reports in seconds

Frontline workers experience AI as a black box requiring hours of prompt tuning and fact-checking

If workers don't trust the tool, they won't use it—they'll route around it. A 2024 McKinsey survey found that 58% of employees cited "lack of explainability" as a barrier to AI adoption, even when tools promised efficiency gains.

The problem: Efficiency is too vague. It's like naming a database "Stuff." You need specificity: what kind of efficiency, measured against what baseline, with what quality threshold?

The Algorithm × Trust Matrix

Successful HCAI deployments balance two axes: algorithm strength and user trust. The Algorithm × Trust infographic shows how the two interact and how that affects organizational AI transformation.

To land in synergistic adoption, organizations must design for:

1. Explainability

Users must understand why the AI made a recommendation. If a loan application is flagged, can the underwriter see which data points triggered the decision? The EU AI Act emphasizes that high-risk AI systems must provide "meaningful information" about their logic.

Example: A credit risk model that shows "Debt-to-income ratio 52% (threshold: 45%)" beats one that says "High risk—denied."
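The credit risk example can be sketched in a few lines of Python. This is a minimal illustration, not a real scoring model: the `Check` class, the `explain_decision` function, and the second "Credit utilization" rule are hypothetical names and values added here. Only the debt-to-income figures come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Check:
    """One rule the model applied: the value it saw and the limit it used."""
    label: str
    value: float
    threshold: float

    @property
    def failed(self) -> bool:
        return self.value > self.threshold

    def explain(self) -> str:
        return f"{self.label} {self.value:.0%} (threshold: {self.threshold:.0%})"


def explain_decision(checks: list[Check]) -> str:
    """Return the decision together with every check that triggered it."""
    failed = [c.explain() for c in checks if c.failed]
    if not failed:
        return "Approved: all checks within thresholds"
    return "High risk: " + "; ".join(failed)


checks = [
    Check("Debt-to-income ratio", 0.52, 0.45),  # figures from the example
    Check("Credit utilization", 0.30, 0.80),    # hypothetical second rule
]
print(explain_decision(checks))
# High risk: Debt-to-income ratio 52% (threshold: 45%)
```

The design choice is that the reason travels with the decision, so the underwriter never sees a bare "denied."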

2. Agency (Human-in-the-Loop)

The AI drafts; the human decides. This design pattern, often called Human-in-the-Loop (HITL), ensures accountability and catches errors that automated systems miss.

Example: A customer service chatbot suggests three responses. The agent selects one, edits it, and sends—preserving judgment and tone control.
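A rough sketch of that chatbot flow, assuming a hypothetical `suggest_replies` function stands in for the model call. The canned drafts, the `Draft` class, and the `source` labels are illustrative assumptions, not part of any real product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    source: str = "ai"


def suggest_replies(customer_message: str) -> list[Draft]:
    """Stand-in for a model call; returns three canned drafts (hypothetical)."""
    return [
        Draft("Sorry about the delay. Your order ships tomorrow."),
        Draft("Thanks for reaching out. I'm checking on your order now."),
        Draft("I can offer a refund or a replacement. Which do you prefer?"),
    ]


def agent_review(drafts: list[Draft], choice: int,
                 edited_text: Optional[str] = None) -> Draft:
    """The human picks a draft and may edit it before anything is sent."""
    chosen = drafts[choice]
    if edited_text is not None and edited_text != chosen.text:
        return Draft(edited_text, source="ai+human")
    return Draft(chosen.text, source="ai, human-approved")


def send(reply: Draft) -> str:
    """Format the outgoing message with a record of who shaped it."""
    return f"[{reply.source}] {reply.text}"


drafts = suggest_replies("Where is my order?")
final = agent_review(
    drafts,
    choice=1,
    edited_text="Thanks for reaching out, Sam. I'm checking on your order now.",
)
print(send(final))
```

Because `send` only accepts what `agent_review` returns, the intended path to the customer runs through the agent, and the `source` label keeps a record of whether the human edited the draft.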

3. Workflow Integration

Don't bolt AI onto existing processes. Redesign the workflow around the new capability. Gartner's 2024 AI Strategy report warns that 70% of AI projects fail due to poor change management, not technical flaws.

Before AI: Sales rep manually enters lead data → Manager reviews weekly → Report generated monthly

After HCAI: AI extracts data from emails in real-time → Rep verifies and enriches → Manager sees live dashboard with anomaly alerts

Automation vs. Augmentation

Human-Centered AI leans toward augmentation over automation:

Automation = replacing human tasks (data entry, sorting, rule-based triage)

Augmentation = enhancing human judgment (research synthesis, scenario modeling, creative drafting)

Think of it as pilot and autopilot. The human remains in command; the AI handles altitude, speed, and routine adjustments. The pilot still lands the plane.

The human is the pilot; AI is the partner that makes the journey easier.

What Success Looks Like

A successful HCAI rollout delivers:

Team empowerment: Workers feel super-powered, not surveilled or replaced

Measurable outcomes: Not just "time saved," but quality improvements, faster decision cycles, or higher win rates

Sustained usage: Adoption rates remain high 6–12 months post-launch

According to MIT Sloan's research on AI adoption, companies with strong "AI literacy programs" and change management see 3.5× higher ROI than those focused on technology alone.

Common Pitfalls

Mistake: Treating AI as a "magic wand"

Why it fails: Workers see it as unreliable

Fix: Set realistic expectations; show limitations upfront

Mistake: Skipping training and onboarding

Why it fails: Users don't know how to prompt or verify outputs

Fix: Invest in contextual training (not generic webinars)

Mistake: Ignoring feedback loops

Why it fails: Tool doesn't improve with use

Fix: Build mechanisms for workers to flag errors and suggest refinements

FAQ

Q: What's the difference between Human-Centered AI and Responsible AI?

A: Responsible AI focuses on ethics, fairness, and governance (preventing harm). Human-Centered AI focuses on usability and value delivery (helping people work better). They overlap but serve different goals. Think of Responsible AI as the guardrails; HCAI is the steering wheel.

Q: Can HCAI work in high-speed, automated environments like trading or manufacturing?

A: Yes, but the "human-in-the-loop" may shift to human-on-the-loop—oversight at a system level rather than every transaction. The key is preserving explainability and the ability to intervene when anomalies occur.

Q: How do I measure trust in an AI tool?

A: Track actual usage vs. mandated usage. If you require the tool but see workarounds (copy-pasting into old systems, manual double-checks), trust is low. Surveys asking "Would you use this if it weren't required?" also reveal trust gaps.
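One way to put a number on the workaround signal in this answer is a simple rate over logged tasks. The sketch below assumes a hypothetical event log with `used_tool` and `redone_manually` flags. Those field names and the sample data are illustrative, and real logs would need their own definition of a workaround.

```python
def workaround_rate(events: list[dict]) -> float:
    """Share of tasks where the worker skipped the tool or redid its output."""
    if not events:
        return 0.0
    bypassed = sum(
        1 for e in events if e["redone_manually"] or not e["used_tool"]
    )
    return bypassed / len(events)


# Hypothetical sample: four tasks logged for a tool that is officially required.
events = [
    {"used_tool": True, "redone_manually": False},
    {"used_tool": True, "redone_manually": True},   # manual double-check
    {"used_tool": False, "redone_manually": False},  # routed around the tool
    {"used_tool": True, "redone_manually": False},
]
rate = workaround_rate(events)
print(f"Workaround rate: {rate:.0%}")
# Workaround rate: 50%
```

A rate that stays high while the tool is mandated points to a trust gap. Pair it with the survey question so the number has context.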

Q: Is HCAI only for large enterprises?

A: No. Even small teams benefit from asking "How does this help my workflow?" before adopting AI. The principles scale, whether you're a 5-person startup or a 5,000-employee corporation.

Q: What if my team resists AI entirely?

A: Start small. Pick one painful task (e.g., meeting transcription) and show quick wins. Build trust incrementally. Resistance often stems from fear of replacement or past tech failures, so address those directly.